Incident response (IR) and security operations center (SOC) work demands rapid recognition of threats, accurate diagnosis, and decisive action under pressure. The discipline grew from an environment where human operators processed incomplete, ambiguous information and applied professional judgment to contain breaches. The skill of reading subtle anomalies in logs, correlating disparate indicators, and coordinating complex mitigation efforts defined the role. Human intuition, shaped through repeated exposure to incidents, once provided a competitive advantage over automated detection systems.

Artificial intelligence began to erode that advantage through advances in pattern recognition, anomaly detection, and predictive analytics. Early systems depended heavily on human tuning and suffered from false positives that required constant triage. Machine learning models improved signal-to-noise ratios, learning to correlate indicators without explicit rules. In parallel, automated response capabilities advanced from predefined playbooks toward adaptive orchestration, where systems execute countermeasures without waiting for human approval. This shift reduced the scope of decisions requiring direct analyst involvement.

Agentic AI introduces a further transformation by integrating perception, reasoning, and action in a persistent operational loop. Such systems ingest diverse data sources, build context dynamically, and pursue investigative threads without explicit tasking. They possess the capacity not only to detect and respond but to reconfigure defensive posture in anticipation of evolving threats. The feedback cycle shortens from hours to seconds, and interventions occur at a scale and speed impossible for human operators to match. Over time, such systems adapt to adversary behavior without human retraining, eroding the strategic advantage once held by human-led SOCs.

Human operators remain relevant in scenarios where political, legal, or strategic context alters the calculus of response. A machine may neutralize a threat effectively but fail to account for second-order consequences such as diplomatic repercussions, reputational damage, or regulatory violations. However, as AI systems integrate policy engines and scenario modeling, those contextual gaps will narrow. The decision-making layer, traditionally human-exclusive, will shrink further as machines learn to weigh operational outcomes against codified strategic priorities.

Organizational inertia and trust barriers will slow full automation. Many enterprises will retain human oversight for assurance and accountability, especially in regulated sectors. Nonetheless, the functional demand for large IR and SOC teams will diminish as AI achieves operational reliability. The surviving human roles will center on governance, exception handling, adversarial testing of the AI itself, and oversight of automated processes. These positions require a deeper understanding of threat models, system internals, and strategic objectives rather than traditional event triage or manual remediation.

The long-term trajectory favors AI dominance in operational security functions. Resistance to this transition will not halt it, as the performance, efficiency, and cost advantages of autonomous systems outpace the capabilities of human-centric operations. The profession will shift from direct engagement with incidents toward supervisory, strategic, and meta-analytical responsibilities, with far fewer practitioners needed to maintain security at scale. The displacement will mirror past industrial transformations where automation absorbed the majority of repetitive and reactive tasks, leaving humans in narrower but higher-order roles.

Agentic AI will not erase the need for security outcomes, but it will significantly alter the work that incident responders and SOC operators perform today. Pressure for speed, coverage, and cost drives the shift. Autonomy that perceives, reasons, and acts on threats at machine speed moves the center of gravity from manual triage toward continuous machine decision-making under human governance. A durable forecast requires structured reasoning, not hype. The following model integrates threat dynamics, enterprise constraints, regulatory demands, and learning-system behavior over time.

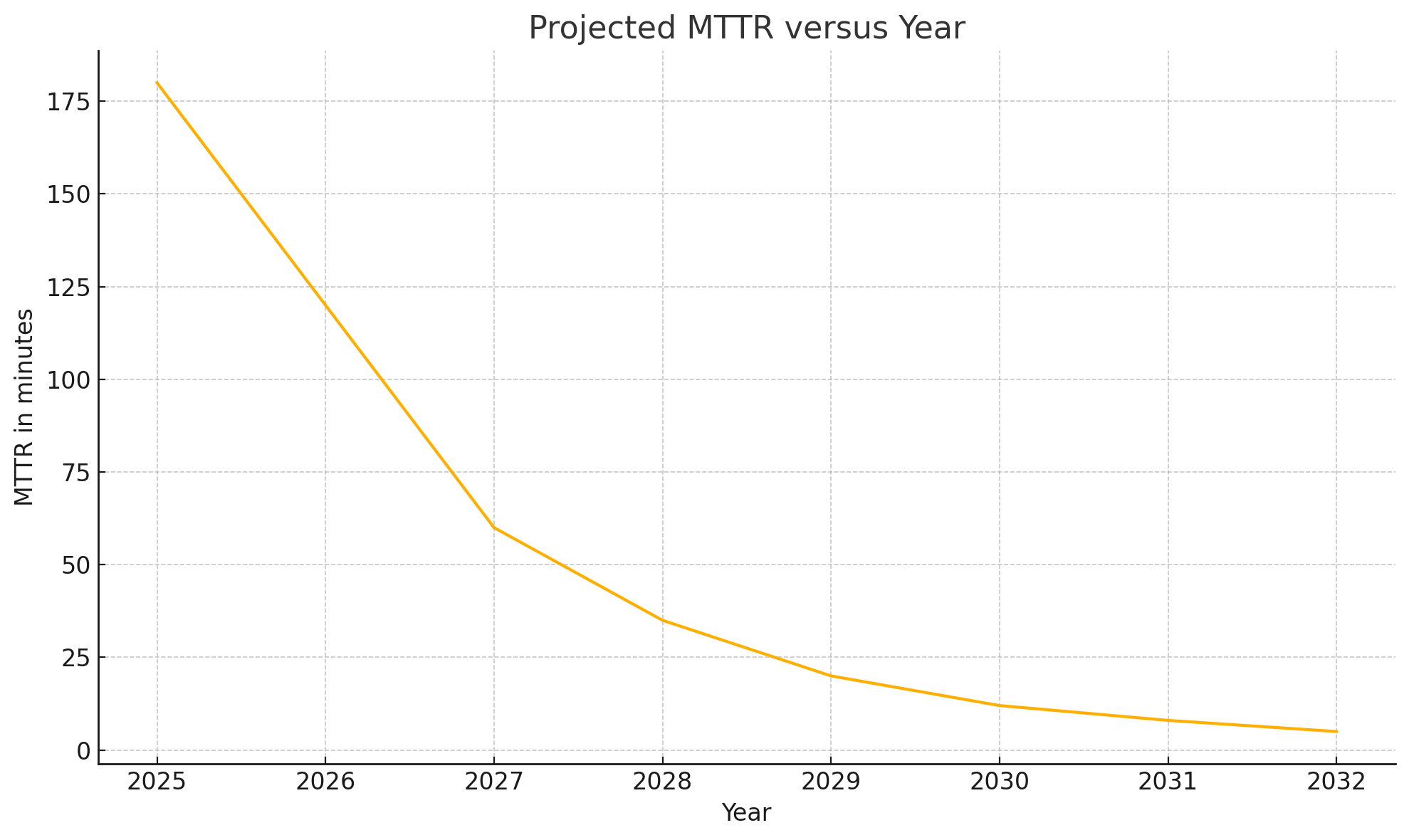

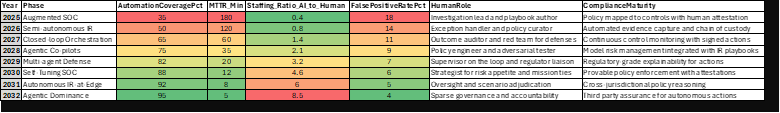

Foundations in 2025 favor augmentation over autonomy. Models rank alerts, stitch weak signals, and recommend actions across EDR, NDR, identity, and SaaS control planes. Human staff still author playbooks and adjudicate impact for business processes. Performance gains concentrate in three areas. Mean time to detect falls as models fuse telemetry across hosts, network, identity, and cloud. Mean time to contain improves as orchestrators standardize responses and pre-stage access and keys. False positive burden falls as models learn negative examples from closed tickets and suppression rules. Staffing patterns begin to change. Fewer entry-level analysts screen alerts. More policy engineers craft the rules of engagement and acceptance criteria for action.
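To make the ranking and false-positive learning concrete, here is a minimal sketch, assuming a simple weighted fusion of per-domain signal strengths and a per-rule false-positive rate estimated from closed tickets. The domain weights, the `Alert` type, and the helper names are hypothetical illustrations, not any vendor's scoring model.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Dict, Iterable, List, Tuple

# Hypothetical per-domain weights; a production model would learn these from data.
DOMAIN_WEIGHTS = {"endpoint": 0.35, "network": 0.25, "identity": 0.30, "cloud": 0.10}

@dataclass
class Alert:
    alert_id: str
    rule: str
    # Normalized signal strength in [0, 1] per telemetry domain (EDR, NDR, identity, cloud).
    signals: Dict[str, float] = field(default_factory=dict)

def fp_rates(closed_tickets: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Laplace-smoothed false-positive rate per rule from (rule, was_false_positive) pairs."""
    totals: Dict[str, int] = defaultdict(int)
    fps: Dict[str, int] = defaultdict(int)
    for rule, was_fp in closed_tickets:
        totals[rule] += 1
        fps[rule] += int(was_fp)
    return {rule: (fps[rule] + 1) / (totals[rule] + 2) for rule in totals}

def score(alert: Alert, rates: Dict[str, float]) -> float:
    # Fuse cross-domain evidence as a weighted sum of per-domain signal strengths.
    fused = sum(DOMAIN_WEIGHTS.get(domain, 0.0) * s for domain, s in alert.signals.items())
    # Rules with no closed-ticket history fall back to an uninformed 0.5 prior.
    return fused * (1.0 - rates.get(alert.rule, 0.5))

def rank(alerts: List[Alert], closed_tickets: Iterable[Tuple[str, bool]]) -> List[Alert]:
    rates = fp_rates(closed_tickets)
    return sorted(alerts, key=lambda a: score(a, rates), reverse=True)
```

With this scheme, an alert from a rule that usually closes as a false positive sinks below a historically reliable rule even when its raw cross-domain evidence looks comparable, which is the "negative examples from closed tickets" effect in miniature.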

Acceleration in 2026 through 2027 shifts incident response into closed-loop operations. Systems run multi-step investigations, fetch fresh evidence, branch probes, and compare outcomes to historical playbooks. Confidence scoring and rollback mechanisms create a safety rail. Human roles move toward exception handling, model supervision, and control mapping. MTTR falls from hours to under an hour for commodity intrusion sets. Adversaries adapt. Payload mutation and cross-domain pivots seek blind spots across SaaS, OT, CI/CD pipelines, and identity federation. Agentic stacks answer with active learning that writes and tests new hypotheses against live telemetry. Value concentrates on model governance and red teaming of the defense stack rather than manual scoping of single hosts.
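A confidence-gated safety rail with rollback can be sketched as follows, assuming each response action ships with its own apply, rollback, and post-action verification callables. The 0.9 threshold and the `ResponseAction` and `SafetyRail` names are hypothetical choices for illustration only.

```python
from dataclasses import dataclass, field
from typing import Callable, List

@dataclass
class ResponseAction:
    name: str
    confidence: float              # model confidence that the action is correct, in [0, 1]
    apply: Callable[[], None]
    rollback: Callable[[], None]
    verify: Callable[[], bool]     # post-action health check, e.g. business service still reachable

@dataclass
class SafetyRail:
    threshold: float = 0.9         # hypothetical auto-execution threshold
    undo_stack: List[ResponseAction] = field(default_factory=list)
    escalated: List[str] = field(default_factory=list)

    def execute(self, action: ResponseAction) -> str:
        if action.confidence < self.threshold:
            self.escalated.append(action.name)   # below threshold: route to a human
            return "escalated"
        action.apply()
        if not action.verify():
            action.rollback()                    # verification failed: restore prior state
            return "rolled_back"
        self.undo_stack.append(action)           # retain so the whole response can be undone later
        return "applied"

    def rollback_all(self) -> None:
        # Undo applied actions in reverse order of application.
        while self.undo_stack:
            self.undo_stack.pop().rollback()
```

Keeping an undo stack rather than a single "last action" lets an operator unwind a multi-step containment in the reverse order it was applied.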

A qualitative break emerges in 2028 through 2029 as multi-agent defense becomes standard at scale. Specialized agents coordinate collection, reasoning, and action. One agent hunts credential misuse in identity graphs. Another agent checks lateral movement with access path modeling. A third agent simulates blast radius under different response plans and proposes the cheapest plan that preserves mission outcomes. Coordination and deconfliction among agents become a complex problem. Enterprises adopt policy engines with machine-readable constraints such as regulatory zones, privacy boundaries, and safety invariants for production systems. Human oversight narrows to policy definition, risk appetite setting, and adversarial testing of the agents. SOC floors shrink. A few senior operators supervise many agent workflows through outcome dashboards and course-of-action traces.
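The "cheapest plan that preserves mission outcomes" step, checked against machine-readable constraints, might look like the sketch below. It assumes a blast-radius simulator has already produced each plan's cost, affected assets, and containment prediction; the `ResponsePlan` and `Policy` fields are hypothetical and cover only two of the constraint types the paragraph names (regulatory zones and safety invariants).

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional

@dataclass(frozen=True)
class ResponsePlan:
    name: str
    cost: float                   # simulated business cost, hypothetical units
    affected: FrozenSet[str]      # assets disrupted, per a blast-radius simulation
    data_regions: FrozenSet[str]  # regions where the plan stores or moves evidence
    contains_threat: bool         # whether simulation predicts containment

@dataclass(frozen=True)
class Policy:
    allowed_regions: FrozenSet[str]   # regulatory zone constraint
    protected_assets: FrozenSet[str]  # safety invariant: never disrupt these

def violations(plan: ResponsePlan, policy: Policy) -> List[str]:
    found = []
    if not plan.data_regions <= policy.allowed_regions:
        found.append("regulatory_zone")
    if plan.affected & policy.protected_assets:
        found.append("safety_invariant")
    if not plan.contains_threat:
        found.append("no_containment")
    return found

def choose_plan(plans: List[ResponsePlan], policy: Policy) -> Optional[ResponsePlan]:
    """Cheapest plan that violates no constraint; None means escalate to a human."""
    feasible = [p for p in plans if not violations(p, policy)]
    return min(feasible, key=lambda p: p.cost, default=None)
```

Returning the list of violated constraints, rather than a bare yes or no, gives supervisors a course-of-action trace explaining why cheaper plans were rejected.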

Maturity in 2030 through 2031 turns autonomy from fast reaction into preemptive defense. Agents learn adversary doctrine, not only signatures. Campaign-level reasoning links cloud abuse, vendor compromise, and third-party identity fraud into one picture with forecasted next steps. Controls shift from static blocklists toward ephemeral guardrails that expire with context. Evidence chains gain cryptographic signing and timestamping for audit. Regulators accept machine action when systems record policy reasoning, simulation evidence, and rollback states. Human staff focus on scenario adjudication where law, diplomacy, safety, or mission readiness override a purely technical optimum. Most manual containment disappears outside of safety-critical environments.
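A tamper-evident evidence chain can be sketched with the standard library, assuming a hash chain in which each entry commits to its predecessor plus an HMAC tag over each entry hash. HMAC is a symmetric stand-in chosen here only because it ships with Python; an auditable deployment would more likely use asymmetric signatures and a trusted timestamping service rather than the local clock. `EvidenceChain` is a hypothetical name.

```python
import hashlib
import hmac
import json
import time
from typing import List, Optional

GENESIS = "0" * 64

def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so identical content always hashes identically.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

class EvidenceChain:
    """Append-only evidence log: each entry hashes its predecessor and carries an HMAC tag."""

    def __init__(self, key: bytes):
        self._key = key
        self.entries: List[dict] = []

    def _tag(self, entry_hash: str) -> str:
        return hmac.new(self._key, entry_hash.encode("utf-8"), hashlib.sha256).hexdigest()

    def append(self, event: dict, timestamp: Optional[float] = None) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        # Local clock by default; a trusted timestamp authority would replace this in practice.
        ts = time.time() if timestamp is None else timestamp
        body = {"event": event, "ts": ts, "prev": prev}
        entry_hash = _digest(body)
        entry = {**body, "hash": entry_hash, "tag": self._tag(entry_hash)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        prev = GENESIS
        for e in self.entries:
            body = {"event": e["event"], "ts": e["ts"], "prev": e["prev"]}
            if e["prev"] != prev or _digest(body) != e["hash"]:
                return False  # entry edited, removed, or reordered
            if not hmac.compare_digest(self._tag(e["hash"]), e["tag"]):
                return False  # tag was not produced with this key
            prev = e["hash"]
        return True
```

Because every hash covers the previous one, editing any recorded action, or deleting it, breaks verification for the rest of the chain.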

Dominance arrives in 2032 when agentic stacks own investigation and containment for the vast majority of cases. Human roles condense into sparse governance, post-incident accountability, purple teaming against the agents, and crisis leadership for the few situations where machine action conflicts with business or state imperatives. Incident response as a craft survives only at the edges. Enterprises still need judgment, but they no longer need large teams for routine events.

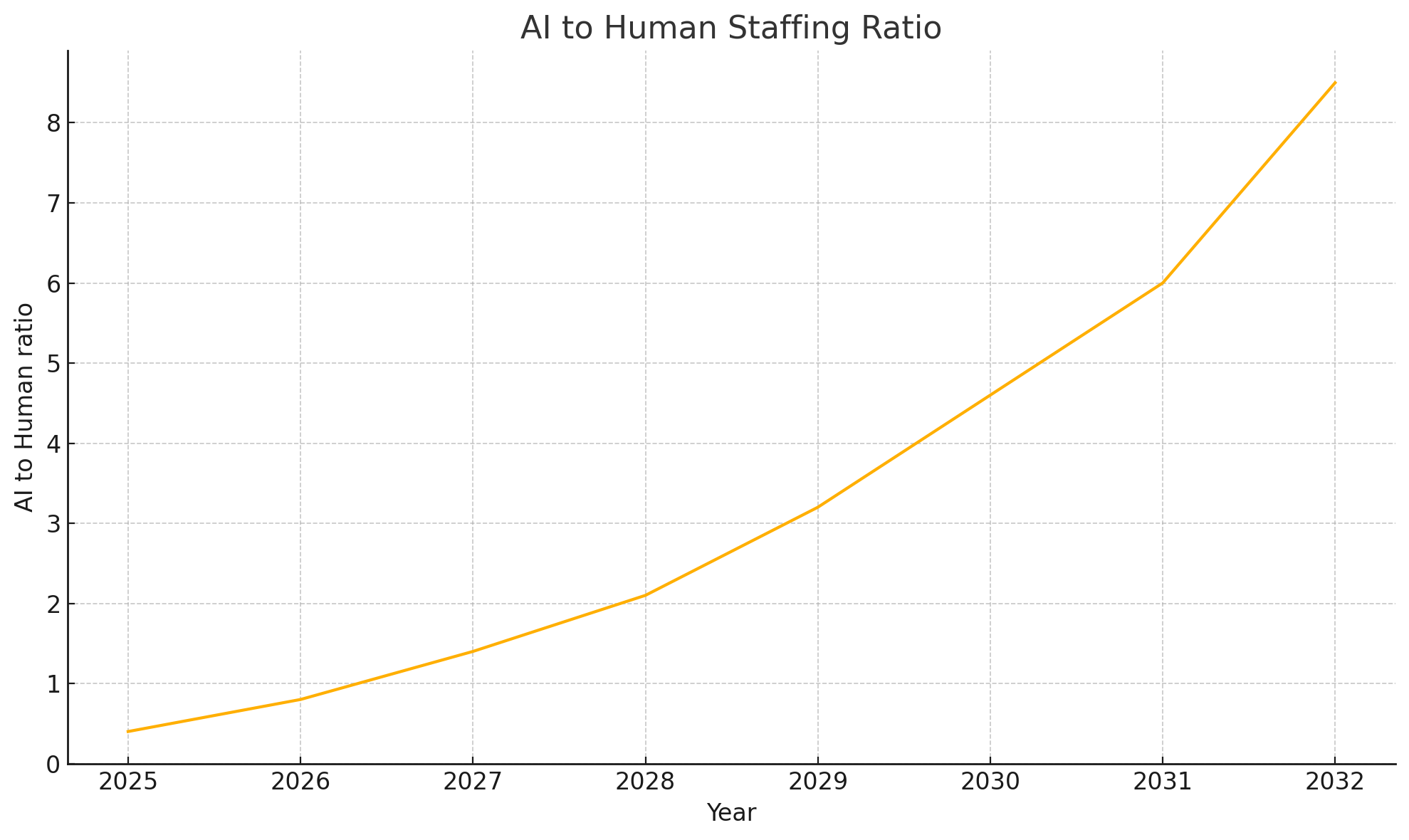

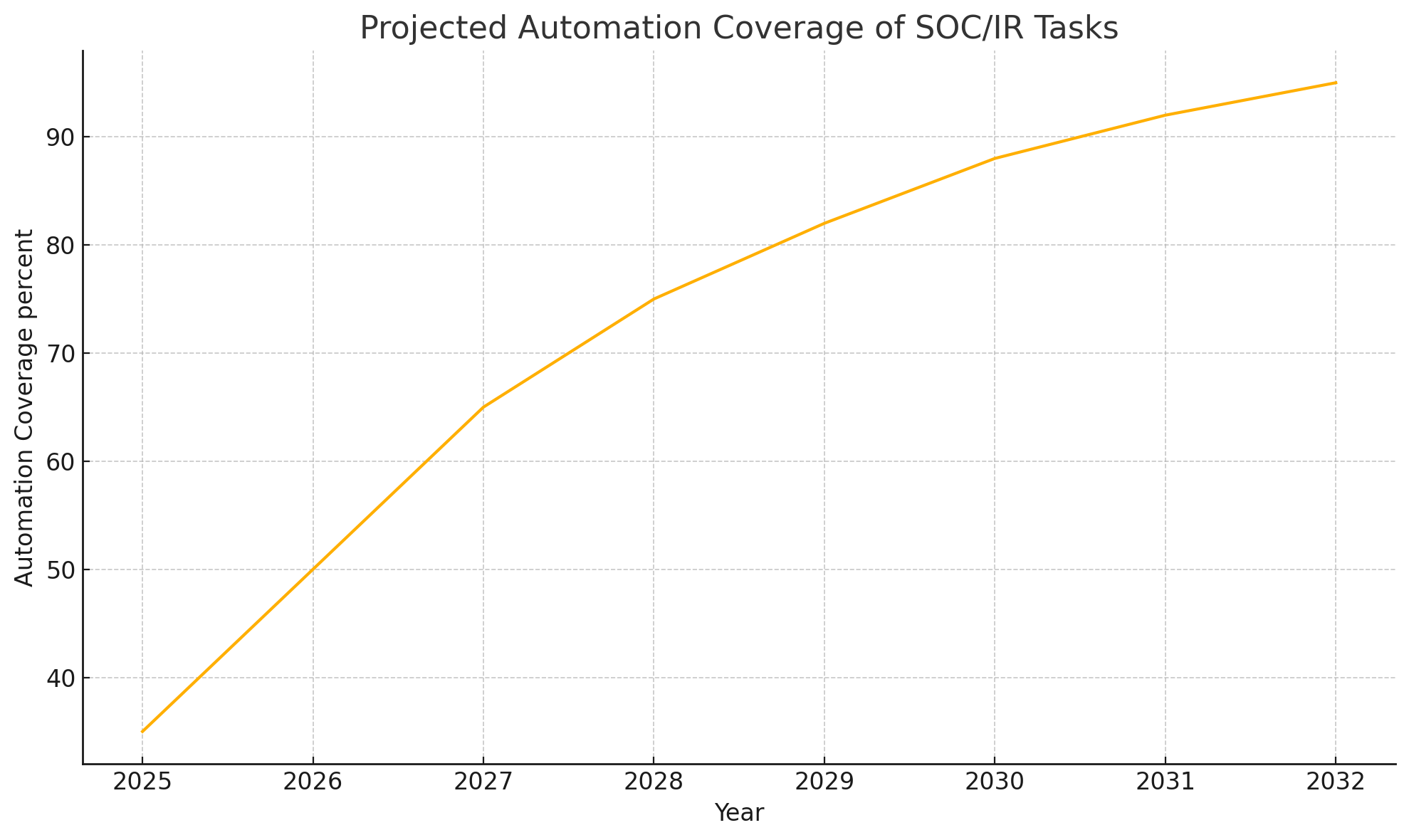

A timeline with measurable indicators anchors the forecast. The table shared above defines year, phase, automation coverage, MTTR, false positive rate, staffing ratio, and compliance maturity. The charts visualize MTTR decline, automation coverage growth, and the rise of the AI-to-human staffing ratio. Projections show automation coverage rising from the mid-thirties percent in 2025 to the mid-nineties percent in 2032. MTTR falls from three hours to minutes. The staffing ratio flips from human leadership to machine leadership during the 2027-2029 window.
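Tracking these indicators requires agreed definitions. The sketch below assumes MTTR is measured from detection to resolution, that "automation coverage" means incidents closed with no human action, and that the AI-to-human ratio counts supervised agent workflows per human operator. All three definitions, and the `Incident` record, are illustrative assumptions rather than the definitions behind the table and charts.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean
from typing import List

@dataclass
class Incident:
    detected: datetime
    resolved: datetime
    handled_autonomously: bool  # closed end to end with no human action (assumed definition)

def mttr_minutes(incidents: List[Incident]) -> float:
    """Mean time to resolve, in minutes, measured from detection."""
    return mean((i.resolved - i.detected).total_seconds() / 60 for i in incidents)

def automation_coverage(incidents: List[Incident]) -> float:
    """Percentage of incidents closed without human action."""
    return 100.0 * sum(i.handled_autonomously for i in incidents) / len(incidents)

def ai_to_human_ratio(agent_workflows: int, human_operators: int) -> float:
    """Supervised agent workflows per human operator; above 1.0, machines carry more of the load."""
    return agent_workflows / human_operators
```

Under these definitions, the flip from human to machine leadership corresponds to the ratio crossing 1.0.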

Reasoning for each inflection point follows. Data scale favors machine perception. Log volumes, identity graphs, and cloud events exceed human review capacity by orders of magnitude. Action bandwidth favors machine execution. Orchestrators touch thousands of endpoints and services at once with sealed credentials and pre-approved changes. Learning dynamics favor reinforcement signals drawn from ticket outcomes and rollback success. Compliance acceptance favors recorded reasoning traces, model cards, an inventory of data sources, and test coverage. Adversary adaptation favors agents that spin up new detectors in hours instead of quarterly rule cycles.
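One way to turn ticket outcomes and rollback success into a reinforcement signal is a Beta-Bernoulli Thompson sampling bandit over response playbooks, sketched below. The reward definition (contained and not rolled back) and the `PlaybookSelector` name are assumptions for illustration; the paragraph does not prescribe a particular learning algorithm.

```python
import random
from dataclasses import dataclass
from typing import Dict, Iterable, Optional

@dataclass
class PlaybookStats:
    successes: int = 0  # contained and not rolled back
    failures: int = 0   # rolled back, or failed to contain

class PlaybookSelector:
    """Beta-Bernoulli Thompson sampling over response playbooks (illustrative sketch)."""

    def __init__(self, playbooks: Iterable[str], seed: Optional[int] = None):
        self.stats: Dict[str, PlaybookStats] = {p: PlaybookStats() for p in playbooks}
        self.rng = random.Random(seed)

    def choose(self) -> str:
        # Draw a plausible success rate from each playbook's Beta(1 + s, 1 + f) posterior.
        draws = {
            p: self.rng.betavariate(1 + s.successes, 1 + s.failures)
            for p, s in self.stats.items()
        }
        return max(draws, key=draws.get)

    def record(self, playbook: str, contained: bool, rolled_back: bool) -> None:
        s = self.stats[playbook]
        if contained and not rolled_back:
            s.successes += 1
        else:
            s.failures += 1

    def posterior_mean(self, playbook: str) -> float:
        s = self.stats[playbook]
        return (1 + s.successes) / (2 + s.successes + s.failures)
```

Sampling from the posterior rather than always picking the best mean keeps some exploration alive, so a new playbook still gets tried while evidence about it is thin.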

Risks and constraints temper the forecast. Model error and overconfidence can cause harm. Safety rails and reversible actions reduce downside. Supply chain integrity for models and prompts becomes a new attack surface. Separation of duties, artifact signing, and provenance tracking reduce risk. Policy brittleness can stall machine action in edge cases. Policy simulators and continuous validation reduce brittleness. Workforce disruption will arrive unevenly across sectors. High-assurance domains retain more human decision points for longer. Low-friction, SaaS-heavy enterprises move faster toward autonomy. None of the constraints overturn the direction of travel under realistic assumptions for cost, speed, and accuracy.
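Provenance tracking for models and prompts can start with something as simple as refusing to load any artifact whose SHA-256 digest does not match a pinned manifest. The sketch assumes the manifest (relative path to hex digest) has already been signed and verified by a separate step, which is out of scope here; `verify_artifacts` is a hypothetical helper.

```python
import hashlib
import hmac
from pathlib import Path
from typing import Dict

def sha256_file(path: Path) -> str:
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):  # stream large model files
            h.update(chunk)
    return h.hexdigest()

def verify_artifacts(manifest: Dict[str, str], root: Path) -> Dict[str, str]:
    """Return {relative_path: problem} for artifacts that fail provenance checks."""
    problems: Dict[str, str] = {}
    for name, expected in manifest.items():
        path = root / name
        if not path.is_file():
            problems[name] = "missing"
        elif not hmac.compare_digest(sha256_file(path), expected):
            problems[name] = "digest_mismatch"  # model or prompt altered after pinning
    # Files present on disk but absent from the manifest were never approved.
    for path in root.rglob("*"):
        rel = path.relative_to(root).as_posix()
        if path.is_file() and rel not in manifest:
            problems[rel] = "unpinned"
    return problems
```

Flagging unpinned files matters as much as flagging altered ones, since an injected prompt template is a supply chain change even if no pinned file was touched.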

A strategy for SOC and IR leaders during the transition requires decisive retooling. Training pivots from manual triage toward policy engineering, test design, adversarial evaluation, and failure analysis. Metrics pivot from ticket closure counts toward outcome quality, safe action rates, and simulated loss avoided. Governance pivots from static change windows toward guardrails encoded as constraints that the agents must honor. Procurement pivots from boxed tools toward platforms that expose reasoning traces, action provenance, and rollback assurance. Career paths pivot toward risk, trust, and safety disciplines with strong ties to red teams and legal counsel. Organizations that move early compress cost, shrink dwell time, and harden against fast-moving campaigns. Organizations that delay will face rising loss given breach as attackers adopt agentic systems of their own for discovery, privilege escalation, and lateral movement.

A final judgment follows with no hype. Obsolescence will strike tasks, not missions. Threat response missions remain. Human labor that once shouldered detection and containment will contract sharply as agentic systems outpace human speed and scale. A smaller cadre of practitioners will lead policy, assurance, and crisis action with more authority and higher stakes. Preparation that starts now avoids painful dislocation and positions security as an engineered control system rather than an endless queue of alerts.

