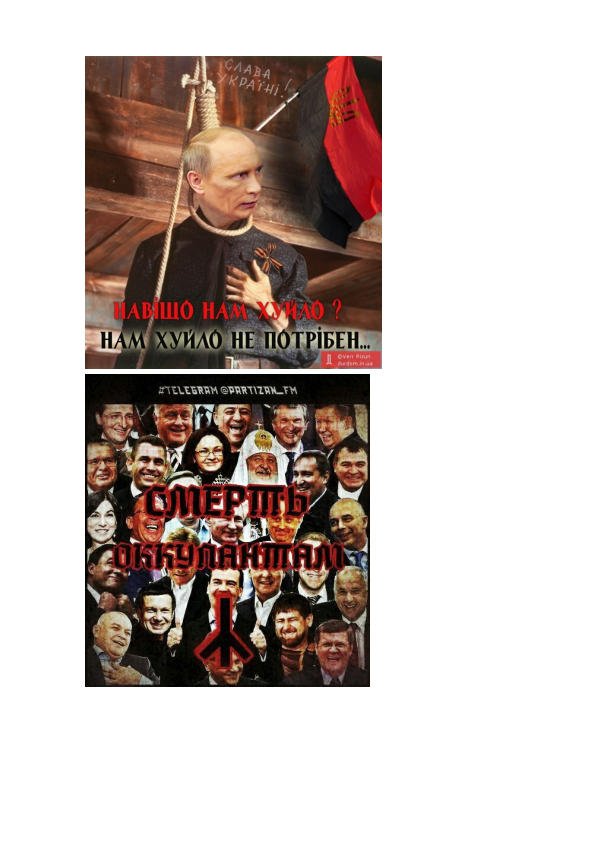

The internal annex to the terms of reference for Oculus specifies which violations it should find in pictures and videos on the Internet. In addition to information about terrorism, drugs, and suicide methods, the system should detect calls for rallies and approval of them, “justification, calls for the violent overthrow of power,” as well as insults to the president (“photoshopped images, demotivators, caricatures, cartoons, sexual intercourse”), obscene language directed at him, and “comparing the president to negative characters and condemned activities (e.g. Hitler, werewolf, dictator, racist, traitor)”.

The document notes that the paragraphs on “justifying and calling for the violent overthrow of power”, insulting the president and accusing him of extremism were added on February 17, 2022 – a week before the start of Russia's full-scale invasion of Ukraine.

Also on the list of violations are “demonstration of the attractiveness of the image of representatives of LGBT culture” and “images of persons that do not correspond to the traditional image of a man and a woman (for example, masculine female faces, men with makeup).”

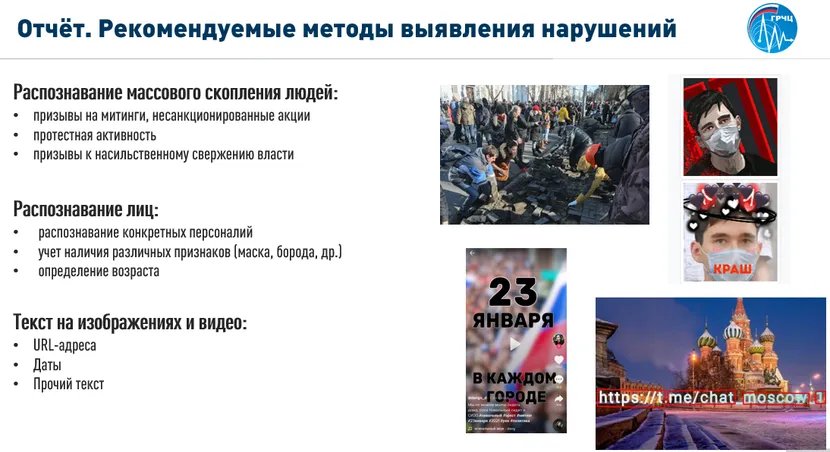

Internal presentations on Oculus name the recognition of protest activity as the main goal of its creation.

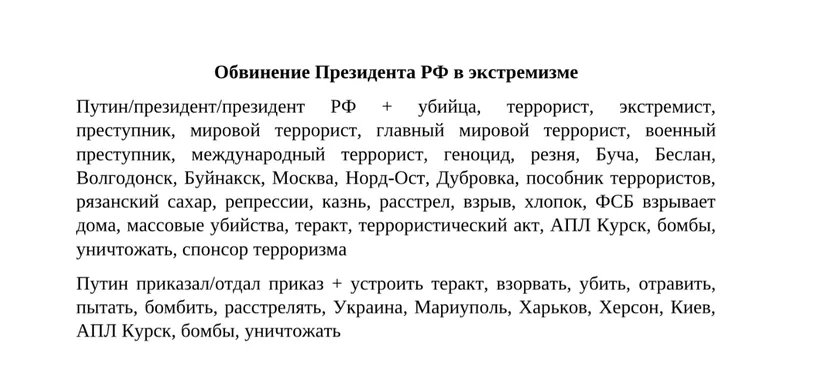

In September 2022, an employee of the monitoring department sent a folder titled “Materials on the Oculus” to a colleague. It contains examples of photoshopped images of Putin and notes that pictures need to be tracked not only of him, but of all members of the government. The folder also contains a dictionary intended for automatically recognizing, for example, accusations of extremism against the president and approval of the overthrow of power.

The leak does not contain information about Oculus being put into operation. Judging by the correspondence of GRFC employees, in the summer of 2022 they were actively labeling data sets for training the Oculus neural network and even worked shifts on holidays to do so.

Files located at the end

At the end of February 2022, the head of the scientific and technical center, Alexander Fedotov, and the head of the analysis department, Roman Korostashov, demonstrated the Oculus model. According to their statements, the system recognized, for example, cuts on the wrists, prohibited symbols, and train surfing (riding on the outside of a train, clinging to the car by ladders, footboards, etc. – Ed.), and identified a person wearing a mask. They did not show any results related to the identification of protest activity.

According to the GRFC's plans, by 2024 Oculus must learn to classify actions not only in photos, but also in videos: again, to recognize rallies, as well as genuinely life-threatening actions such as self-harm (cuts, strangulation), train surfing, school shootings and mass fights. The leaked documents make no mention of advances in video recognition.

Roskomnadzor's plans also include “recognition of complex multimodal media materials” – posters, comics and memes – since they may contain prohibited information “both directly and indirectly.” At the same time, the authors admit that this is difficult, since “automated monitoring using AI [artificial intelligence] requires a contextual understanding of Internet culture: recent events, political views, cultural beliefs, since memes often refer to other memes or other online events”. The GRFC plans to complete research on how to look for violations in memes only in 2024.

“At least 100 violation cards per day.” How existing monitoring systems work

GRFC employees currently monitor all social networks, media and websites every day, both manually and with the help of several programs. Some programs are responsible for the media, others for social networks and websites.

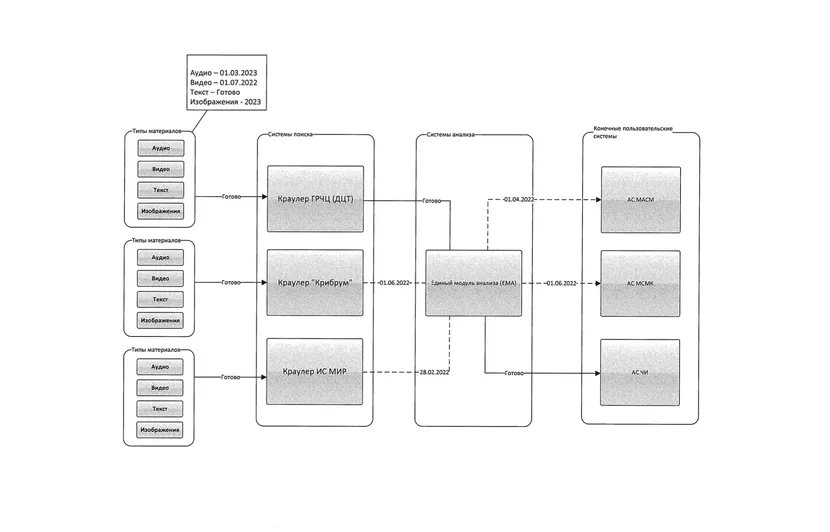

For the media, an automated system for monitoring mass communications (AS MSMK) is used. The list of monitored media comes from Roskomnadzor.

It follows from the leaked documents that AS MSMK finds potential violations by keywords on various topics (suicides, extremism, calls for rallies, “fakes” about the war in Ukraine, “foreign agents” and others). Every day the system generates a batch of cards with alleged violations. The operator reviews the articles and comments and decides whether they contain violations. If they do, the operator registers the violations; if not, the operator rejects the card. Cards that the operator accepts as confirmed violations automatically go first to the examination department of the GRFC, then to Roskomnadzor.

Dictionary-based analysis is inaccurate and “requires high labor costs” because operators have to manually cross-check a large volume of materials, the GRFC admits. The amount of work can be estimated from the reports that new employees fill out at the end of their probationary period. For instance, an information analysis specialist reported in July 2022 that she had drawn up at least 100 cards of suspected violations per day and manually entered at least 40, and had also managed to monitor the Internet “to identify banned anime films.”

Since 2022, the system has automatically received not only text content, but also transcripts of radio and television broadcasts.

For surveillance of social networks, an automated system for monitoring and analyzing social media (AS MASM) is used. Since 2022, it has been merged with the Clean Internet (AS CHI) system, which censors Yandex search results.

As with the media, some violations in social networks are searched for manually and some automatically, followed by human verification. For example, MASM automatically searches for materials related to “fake news” about the war in Ukraine and anti-war rallies.

Violations are monitored automatically only in the social networks VKontakte, Odnoklassniki, My World, Answers Mail.ru, LiveJournal and YouTube. The rest of the social networks – Instagram, Facebook, Twitter, TikTok, Telegram, Rutube – are monitored manually by GRFC staff; automation for them is only planned.

To that end, Roskomnadzor planned to sign a contract, starting in June 2022, with Kribrum, the company of Natalia Kasperskaya and Igor Ashmanov, who cooperate with the Russian authorities and support censorship and the war in Ukraine.

Ultimately, all of the projects Roskomnadzor is developing to analyze materials from the media, social networks and search results are planned to be merged into a single system, at the center of which is the Unified Materials Analysis Module based on artificial intelligence. Here is how this system looks in the diagrams.

The leaked documents show that Roskomnadzor’s plans for total censorship of the Internet using artificial intelligence are still very far from being realized. But it is obvious that as new functions and systems are introduced, the scale of surveillance of those who dare to speak out in a way that is not beneficial to the Russian regime will grow.

“Great opportunity to cut the budget”

The GRFC holds expert councils on artificial intelligence several times a year. Representatives of the industry, scientists, and officials gather and make presentations at them. We spoke on condition of anonymity with one of the participants of such councils, an expert in the field of machine learning.

He said that these councils can be considered “something educational and enlightening for an internal audience”, where industry experts give reports on technologies and developments, government representatives “tell how cool they are, mentioning the most fashionable words of the season [like ‘artificial intelligence’, ‘neural networks’, ‘computer vision’ and others], and the management is inspired and gives the budget.”

According to our interlocutor, the GRFC's dream of introducing total censorship based on artificial intelligence is theoretically feasible, but unreasonably expensive. “To do this, you need to build several teams: data collection and labeling, monitoring teams, engineering teams, managers, and many others. And of course, to provide it with its own data center with the latest video cards (expensive). A great opportunity to cut the budget. This approach does not compete with the alternative: to seat several hundred moderators for pennies so that they manually monitor social networks.”

For example, the task of finding images that insult Putin would alone require a lot of resources. “It’s easy to develop a simple classifier inside VKontakte that determines that there is a president in the picture, and the picture has a meme context (with captions and other things), and it can be done by VKontakte’s internal forces,” the expert continues. “But in order for this to work constantly at the level of the entire social network, a significant part of the VKontakte team needs to be diverted to this task. To turn this into an industrial solution that works with a large list of social networks, instant messengers, and websites is, rather, a reason to secure an even larger budget. A budget that will go where it will.”

The Vepr project, which is supposed to predict future “information threats” and protest moods, draws particular skepticism from the expert: “I would not worry too much that such a system would be implemented. Our industry has such low-hanging fruit as online advertising optimization. Multi-million dollar profits are promised to those who can even slightly optimize such a mundane task. And they want a forecast of the development of the social situation based on posts on a social network. It seems that tossing a coin will be more effective than the predictions of such a system.”
