Generative AI builds hypotheses at scale, while agentic AI pushes those hypotheses through structured cycles of inquiry, testing, refinement, and decision support. Intelligence programs benefit most when both layers are integrated with disciplined tradecraft frameworks such as those taught through Treadstone 71 and the Cyber Intelligence Training Center. A deeper look shows how each layer strengthens modern analytic operations.

Generative models create wide hypothesis fields from sparse clues. Analysts gain access to rapid pattern expansion, narrative mapping, and structured scenario branching. A generative engine produces first-order interpretations, alternate explanations, and falsifiable storylines that align with structured analytic techniques. Treadstone 71 programs already train analysts to map hypotheses, examine assumptions, interrogate evidence, and forecast futures. Generative AI accelerates each step while still requiring human adjudication, STEMPLES² Plus cross-domain reasoning, and disciplined sourcing.

Agentic AI introduces autonomy. An agent plans tasks, breaks them into actions, retrieves data, applies analytic workflows, and evaluates outcomes against specified standards. A mature agentic system can run structured analytic techniques such as Analysis of Competing Hypotheses, Indicators of Change, or Red Team hypothesis challenges without analyst prompting. Strong governance keeps agents from drifting by holding them to procedural logic consistent with Treadstone 71 doctrine.
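As an illustration of what running a structured analytic technique can look like inside an agent step, here is a minimal sketch of the scoring stage of Analysis of Competing Hypotheses. It assumes a simplified rating scheme ("C" consistent, "I" inconsistent, "N" neutral) and optional evidence weights. The function name `ach_rank`, the sample evidence, and the weights are hypothetical and are not taken from any Treadstone 71 material.

```python
from typing import Dict, List, Optional, Tuple


def ach_rank(
    matrix: Dict[str, Dict[str, str]],
    weights: Optional[Dict[str, float]] = None,
) -> List[Tuple[str, float]]:
    """Rank hypotheses by weighted inconsistency, lowest score first.

    matrix maps each evidence item to its rating against each hypothesis.
    Only "I" (inconsistent) ratings add to a hypothesis's score, which
    reflects the ACH emphasis on disproving rather than confirming.
    """
    weights = weights or {}
    scores: Dict[str, float] = {}
    for evidence, ratings in matrix.items():
        weight = weights.get(evidence, 1.0)  # unweighted evidence counts as 1.0
        for hypothesis, rating in ratings.items():
            scores.setdefault(hypothesis, 0.0)
            if rating == "I":
                scores[hypothesis] += weight
    # Sort by score, then by name so ties come out in a stable order.
    return sorted(scores.items(), key=lambda item: (item[1], item[0]))


# Hypothetical evidence-versus-hypothesis matrix for illustration only.
matrix = {
    "E1": {"H1": "C", "H2": "I", "H3": "N"},  # lure reuses known infrastructure
    "E2": {"H1": "C", "H2": "C", "H3": "I"},  # activity matches a work schedule
    "E3": {"H1": "I", "H2": "N", "H3": "I"},  # possible planted artifacts
}
weights = {"E3": 0.5}  # assumed lower weight for evidence that may be deceptive

ranking = ach_rank(matrix, weights)
for hypothesis, score in ranking:
    print(hypothesis, score)
```

The hypothesis with the lowest inconsistency score survives best against the evidence, but the ranking is an input to analyst adjudication, not a verdict.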

A combined system supports intelligence teams in ways that strengthen rigor rather than weaken it. A generative layer widens the hypothesis field. An agentic layer navigates each hypothesis through structured cycles anchored in tradecraft. Analysts focus on judgment, validation, deception detection, and narrative warfare implications.
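The hand-off between the two layers can be sketched roughly as follows. This is a minimal illustration under stated assumptions: the candidate hypotheses stand in for generative model output, the checks are placeholder functions rather than real tradecraft tests, and every name here (`Hypothesis`, `run_agentic_cycle`, the status labels) is hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Hypothesis:
    statement: str
    findings: Dict[str, bool] = field(default_factory=dict)
    status: str = "untested"


Check = Callable[[Hypothesis], bool]


def run_agentic_cycle(
    hypotheses: List[Hypothesis], checks: Dict[str, Check]
) -> List[Hypothesis]:
    """Run every check on every hypothesis and record the outcome.

    Hypotheses that pass all checks are queued for analyst review;
    the rest are marked for refinement. Nothing is accepted automatically.
    """
    for hypothesis in hypotheses:
        for name, check in checks.items():
            hypothesis.findings[name] = check(hypothesis)
        passed = all(hypothesis.findings.values())
        hypothesis.status = "for_analyst_review" if passed else "needs_refinement"
    return hypotheses


# Placeholder data: statements that have at least one attributable source.
sourced = {"Actor A ran the campaign"}

# Placeholder checks standing in for real sourcing and specificity tests.
checks: Dict[str, Check] = {
    "has_sourcing": lambda h: h.statement in sourced,
    "is_specific": lambda h: len(h.statement.split()) >= 4,
}

# Stand-in for the generative layer's output.
candidates = [Hypothesis("Actor A ran the campaign"), Hypothesis("Unknown actor")]

results = run_agentic_cycle(candidates, checks)
for result in results:
    print(result.statement, "->", result.status)
```

The design choice worth noting is that the agent only routes hypotheses. Judgment stays with the analyst, which matches the division of labor described above.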

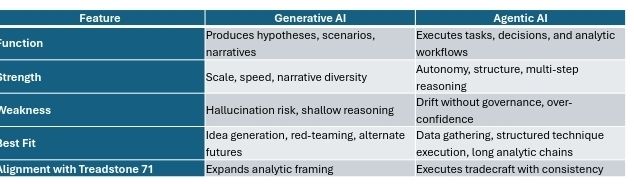

A comparison helps frame the functional differences.

A combined architecture strengthens intelligence analysis. Generative output feeds agentic workflows that test assumptions, confirm evidence, detect deception, and refine scenarios. Agents monitor indicators connected to PIRs, assess narrative shifts, and trigger alerts when thresholds align with adversary patterns. A full cycle produces validated products aligned with tradecraft standards taught through Treadstone 71.
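Indicator monitoring of this kind can be sketched as a simple threshold check. The example below is an assumption-laden illustration: the `Indicator` fields, the PIR labels, the indicator names, and the observed values are all hypothetical, and a real system would pull observations from collection sources rather than a hard-coded dictionary.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Indicator:
    name: str         # what is being measured
    pir: str          # the priority intelligence requirement it supports
    threshold: float  # value at or above which an alert fires


def check_indicators(
    indicators: List[Indicator], observations: Dict[str, float]
) -> List[Tuple[str, str, float]]:
    """Return (pir, indicator name, observed value) for each breached threshold.

    Indicators with no observation are skipped rather than treated as zero.
    """
    alerts: List[Tuple[str, str, float]] = []
    for indicator in indicators:
        value = observations.get(indicator.name)
        if value is not None and value >= indicator.threshold:
            alerts.append((indicator.pir, indicator.name, value))
    return alerts


# Hypothetical indicators and observations for illustration only.
indicators = [
    Indicator("narrative_amplification_rate", "PIR-1", 0.7),
    Indicator("new_domain_registrations", "PIR-2", 25.0),
    Indicator("forum_recruitment_posts", "PIR-2", 10.0),
]
observations = {
    "narrative_amplification_rate": 0.82,
    "new_domain_registrations": 12.0,
}

alerts = check_indicators(indicators, observations)
for pir, name, value in alerts:
    print(f"ALERT {pir}: {name} observed at {value}")
```

Tying each indicator to a PIR keeps every alert traceable to a stated requirement, so analysts can see why it fired.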

A forward-leaning intelligence program merges three layers: analysts, generative models, and agents. Analysts direct reasoning. Generative models explore possibility space. Agents execute structured analytic tasks. Cognitive warfare environments demand systems that process large inputs, test stories quickly, and adapt while preserving analytic rigor. A disciplined architecture built around Treadstone 71 methodologies delivers that outcome.

Further expansions can deepen the integration of generative and agentic systems into cognitive warfare detection, OSINT automation, and counterintelligence workflows described at http://www.treadstone71.com and cyberinteltrainingcenter.com/p/featured.
