The landscape of strategic influence has shifted from the mere dissemination of “fake news” to a sophisticated paradigm of high-fidelity mimicry. This evolution, epitomized by the Russian-linked Operation Doppelgänger, reached a critical juncture during the October 2025 Czech parliamentary elections. The operation no longer relies solely on the volume of falsehoods but on the precision of its cloning techniques, which replicate the digital aesthetic and institutional authority of legitimate media outlets and government bodies. By hijacking the visual and structural language of trusted journalism, the actors behind Doppelgänger seek to erode the very foundations of institutional trust, creating a cognitive environment in which authentic reporting and state-sponsored subversion become indistinguishable to the casual observer. This shift represents a move toward what professional tradecraft defines as cognitive warfare: the art of using technology to alter the cognition of human targets, who are often unaware of the attempt.

The Strategic Shift Toward Mimicry

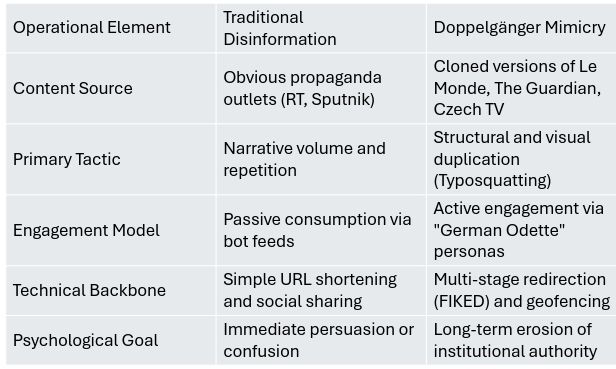

The historical context of Russian information operations reveals a trajectory from the “Firehose of Falsehood” model toward the current “Doppelgänger” model. In the early 2020s, the primary tactic was volume; by saturating the information space with conflicting narratives, actors could create a “truth decay” that paralyzed decision-making. However, as audiences became more attuned to low-quality disinformation, the adversary adapted. The 2025 Czech election served as a laboratory for this new doctrine, where the focus transitioned from content to context. Mimicry allows an operation to bypass the initial skepticism of the user by wrapping hostile narratives in the skin of reputable institutions.

This evolution necessitates a deeper understanding of the human element in cybersecurity. The Treadstone 71 perspective emphasizes that cybersecurity is not merely about computers and code; it is fundamentally about the people who interact with them. Even the most robust technical defenses can fail if the human component is successfully manipulated through high-fidelity mimicry. Understanding these tactics is central to the mission of modern intelligence training, as detailed at https://www.treadstone71.com/training/the-mission, which focuses on turning analysis into actionable defense.

Actor Profiles and Organizational Structure

Operation Doppelgänger is not a decentralized effort but a coordinated campaign involving specific Russian entities sanctioned for their roles in international subversion. The primary actors identified in these operations include the Social Design Agency (SDA), also known as ASP, and the company Struktura. These firms operate under contracts that often involve the creation of “augmented reality” tools—a term they use to describe large-scale disinformation systems designed to elicit visceral emotional reactions through fabricated documents and videos.

The organizational structure of these entities mirrors that of professional advertising and psychological operations units. They utilize data-driven approaches to monitor engagement across platforms like Facebook, YouTube, Telegram, and TikTok. The actors have demonstrated a high level of sophistication in their ability to manage thousands of “burner” accounts and ad-tracking instances to maintain operational security.

Social Design Agency (SDA/ASP): Leads psychological strategy and narrative creation, focusing on audience segmentation, emotional triggers, and cognitive mapping.

Struktura: Responsible for infrastructure management and domain technicalities, including web cloning, typosquatting, and bot network automation.

Argon Labs: Provides technical auxiliary support and obfuscation, managing proxies, IP rotation, and fingerprinting evasion.

Aeza Group: Offers bulletproof hosting and infrastructure resilience, providing servers resistant to Western takedown requests.

The leadership of these organizations often displays traits consistent with the “Pitch-Black Tetrad”: a combination of Machiavellianism, Narcissism, Psychopathy, and Schadenfreude. In intelligence tradecraft, understanding these behavioral profiles is essential for predicting adversary moves and designing effective countermeasures. This level of analysis is a core component of advanced training programs, such as those found in the https://www.treadstone71.com/training/p-omega-syllabus, which integrates psychology and sociology into cyber intelligence.

Technical Orchestration: The FIKED Redirection Model

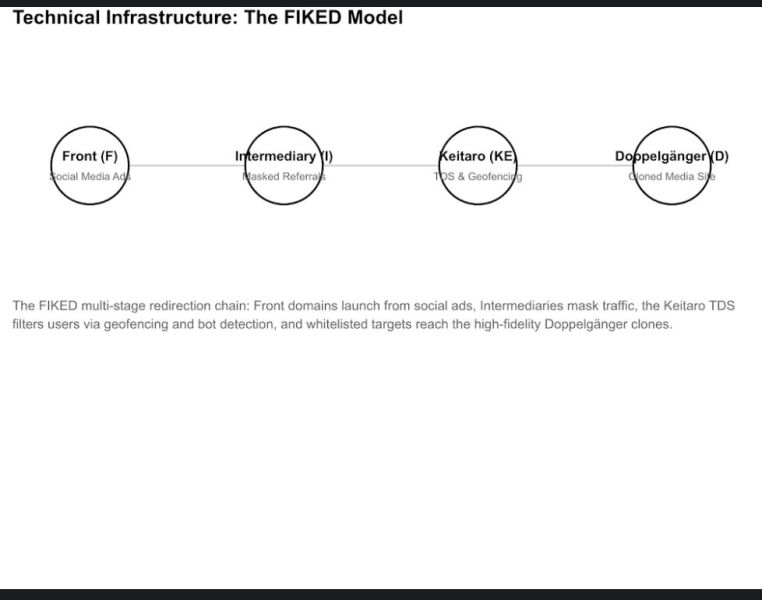

The technical architecture behind Operation Doppelgänger is designed to achieve three main goals: bypassing content moderation, geofencing the target audience, and tracking campaign effectiveness. Central to this is the FIKED model, a four-stage redirection chain that masks the true nature of the operation from platform moderators and international researchers.

The first stage involves Front domains (F), which are expendable URLs advertised on social media, particularly X. These lead to Intermediary domains (I) that handle the initial referral logic, ensuring that the traffic appears organic to the final destination. The most critical node is the Keitaro domain (KE), which hosts a traffic distribution system (TDS). This system uses complex logic to filter visitors:

Geofencing: The system checks the visitor’s IP address against a whitelist of target countries. For the 2025 Czech operation, this was restricted to Czech-based IPs.

User-Agent Analysis: It identifies and blocks known researcher tools, VPNs, and bot scrapers to prevent detection by the cybersecurity community.

Redirection Logic: Whitelisted users receive a 302 redirect to the final Doppelgänger content, while non-targets receive a 200 status code and remain on a benign, parked page.
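For defenders, the filtering behaviour described above suggests a simple check: request the same URL from several vantage points and compare the responses. The sketch below works on observations that have already been collected rather than making network requests. The `Observation` record, the `looks_cloaked` function, and the sample URL are hypothetical names introduced for illustration, and the rule it applies (a 302 for the target country, a 200 elsewhere) is only the pattern described in this section, not a complete detector.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Observation:
    """One HTTP response seen from a given vantage point (hypothetical record)."""
    vantage_country: str            # country code of the probing IP, e.g. "CZ"
    status: int                     # HTTP status code returned
    location: Optional[str] = None  # Location header, if one was sent


def looks_cloaked(observations: Iterable[Observation], target_country: str) -> bool:
    """Return True when only the target country is redirected.

    Mirrors the pattern in the text: in-target visitors get a 302,
    everyone else gets a 200 and stays on a parked page.
    """
    obs = list(observations)
    inside = [o for o in obs if o.vantage_country == target_country]
    outside = [o for o in obs if o.vantage_country != target_country]
    if not inside or not outside:
        return False  # both views are needed for a comparison
    redirected_inside = all(o.status == 302 and bool(o.location) for o in inside)
    parked_outside = all(o.status == 200 for o in outside)
    return redirected_inside and parked_outside


# Invented sample data: one Czech vantage point, two foreign ones.
sample = [
    Observation("CZ", 302, "https://clone.example/article"),
    Observation("DE", 200),
    Observation("US", 200),
]
print(looks_cloaked(sample, "CZ"))
```

A positive result is only a lead for further analysis: legitimate sites also serve region-specific redirects, so the check is best combined with the domain and content indicators discussed elsewhere in this article.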

The final stage is the Doppelgänger domain (D), which hosts the cloned content. These sites are often registered using typosquatting on alternative extensions like .ltd, .online, or .foo. The clones are visually indistinguishable from the target media outlets, replicating CSS, JavaScript, and branding to an exacting degree.
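One way to hunt for such registrations is to compare newly observed domains against a watchlist of legitimate outlets. The following is a minimal sketch under stated assumptions: the function names and the sample domains are hypothetical, the domain split is deliberately naive (it cuts at the last dot and ignores multi-part suffixes and subdomains), and the one-character threshold is an arbitrary starting point.

```python
from typing import Tuple


def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance via the standard dynamic-programming recurrence."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution
        prev = curr
    return prev[-1]


def split_domain(domain: str) -> Tuple[str, str]:
    """Naive split into (name, extension) at the last dot."""
    name, _, ext = domain.lower().rpartition(".")
    return name, ext


def is_lookalike(candidate: str, legitimate: str, max_distance: int = 1) -> bool:
    """Flag a candidate that reuses, or nearly reuses, a legitimate name."""
    cand = split_domain(candidate)
    legit = split_domain(legitimate)
    if cand == legit:
        return False  # this is the legitimate domain itself
    return edit_distance(cand[0], legit[0]) <= max_distance


# Invented examples: same name on another extension, and a one-character swap.
print(is_lookalike("example-news.ltd", "example-news.cz"))
print(is_lookalike("examp1e-news.cz", "example-news.cz"))
```

Both sample calls are flagged, the first because the name is identical on a different extension and the second because the names differ by a single character. Very short names will produce many false positives at this threshold, so a real watchlist would need tuning per outlet.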

This infrastructure allows for “narrative laundering,” where a story begins on an anonymous blog, is amplified by bots, and eventually ends up on a site that looks like a reputable newspaper, thereby gaining an undeserved veneer of credibility. Mastering the deconstruction of these technical chains is a prerequisite for any professional looking to build a resilient defense, a topic explored in depth at https://www.treadstone71.com/training/building-a-cognitive-warfare-cyber-psyops-program.

The Human Vector: “German Odettes” and Persona Tradecraft

The social layer of Operation Doppelgänger utilizes a tactic known as “German Odettes”—a network of sophisticated fake personas designed to bridge the gap between cloned sites and the general public. These profiles do not merely broadcast content; they participate in legitimate online communities to steer discourse toward the operation’s narratives.

A typical Odette persona claims employment at a high-profile Western company, such as Netflix, to establish an immediate baseline of normalcy and authority. They do not engage in aggressive propaganda. Instead, they utilize “information alibis”—selective truths or “common-sense” grievances—to subtly shift the conversation. In the Czech context, these personas would comment on mainstream Facebook pages, expressing concern about inflation or the risk of war, and then provide a link to a cloned “reputable” site that validates those fears.

The psychological foundation of this tactic relies on several cognitive biases:

Social Proof: By engaging in the comments section, the personas create an illusion of public consensus around the anti-government narrative.

Authority Bias: The fake association with Western brands like Netflix reduces the user’s initial suspicion toward the commenter.

Availability Heuristic: By saturating the comment sections of popular news, they make the fabricated narrative seem more prevalent than it actually is.

These biases are activated through four recurring persona traits:

Western Job Title: Establishes credibility via Authority and Normalcy Bias (e.g., claiming employment at Netflix).

Native Language Fluency: Avoids “foreign bot” markers to provide critical in-group validation.

Conciliatory Tone: Specifically designed to engage non-radical users by reducing cognitive dissonance.

Cross-Platform Presence: Reinforces the reality of the persona for sustained behavioral influence.
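Because these personas ultimately steer readers toward a link, one coarse signal an analyst might look for is the same link domain being pushed by several accounts across several pages. The sketch below assumes comments have already been collected as `(account, page, url)` tuples; that record shape, the function name, the thresholds, and the sample data are all hypothetical. A genuinely popular article would trip the same rule, so the output is a list of candidates for review, not a verdict.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple
from urllib.parse import urlparse

# Each comment is (account, page, linked_url); all values below are invented.
Comment = Tuple[str, str, str]


def shared_link_domains(comments: Iterable[Comment],
                        min_accounts: int = 3,
                        min_pages: int = 2) -> List[str]:
    """Return link domains posted by several accounts across several pages."""
    accounts: Dict[str, Set[str]] = defaultdict(set)
    pages: Dict[str, Set[str]] = defaultdict(set)
    for account, page, url in comments:
        domain = urlparse(url).hostname
        if domain is None:
            continue  # skip malformed or relative links
        accounts[domain].add(account)
        pages[domain].add(page)
    return sorted(d for d in accounts
                  if len(accounts[d]) >= min_accounts
                  and len(pages[d]) >= min_pages)


comments = [
    ("anna", "page-a", "https://clone.example/war"),
    ("boris", "page-b", "https://clone.example/war"),
    ("clara", "page-a", "https://clone.example/prices"),
    ("dan", "page-c", "https://news.example/today"),
]
print(shared_link_domains(comments))
```

In the sample, only `clone.example` meets both thresholds (three accounts, two pages), so it is the only domain returned.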

This focus on the human element is what Treadstone 71 calls People Intelligence (PEOPINT). It requires an understanding of digital psychology and the ability to predict how human targets will react to specific triggers. This is part of the mobilization of the https://www.treadstone71.com/cognitive-army, where the goal is to equip defenders with the skills to identify these manipulative personas before they can take root in the public consciousness.

Case Study: The 2025 Czech Election Narratives

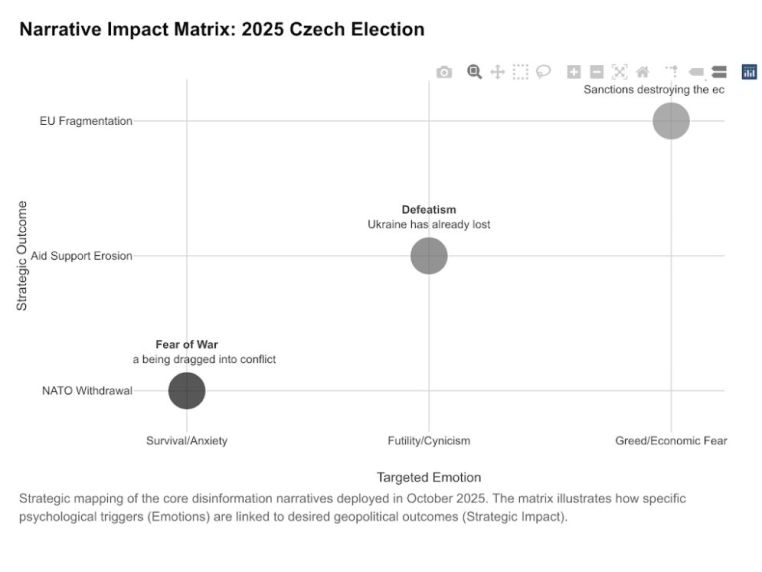

In October 2025, the Czech Republic experienced an unprecedented surge in localized mimicry. Data analysis showed that disinformation content reached a peak of 120 articles per day, often derivative of state propaganda but tailored for local consumption. The operation focused on three core narratives designed to exploit existing societal fissures, such as economic anxiety and post-pandemic distrust.

Narrative 1: “Czechia is being dragged into war”

This narrative aims to spark fear among the population, particularly parents and military-age males, by suggesting that government support for Ukraine will lead to direct kinetic conflict. It leverages the historical trauma of the “Munich betrayal” by framing the current government as elites who are sacrificing the Czech people for foreign interests. The cloned sites hosted fabricated “internal memos” from the Ministry of Defense suggesting upcoming conscription.

Narrative 2: “Ukraine has already lost”

By promoting defeatism, the operation seeks to make continued Czech aid seem like a futile waste of national resources. This narrative uses “information alibis”—selective military setbacks or corruption scandals in Ukraine—to suggest that the conflict is unwinnable and that support is merely prolonging the inevitable suffering of Czech taxpayers.

Narrative 3: “Sanctions are destroying the Czech economy”

This narrative targets the working class by shifting the blame for inflation and energy costs from the Russian invasion to the European response. Cloned financial news sites published “expert reports” claiming that the Czech industrial base would collapse within months if sanctions were not lifted, specifically highlighting the impact on energy-intensive sectors like automotive manufacturing.

The cumulative effect of these narratives is not just to influence a single vote but to create a state of “cognitive attrition”. When citizens can no longer trust the information from their own ministries, they become paralyzed, leading to the erosion of institutional trust and the weakening of the democratic social contract.

Cognitive Warfare: Combat Without Fighting

The Treadstone 71 framework defines cognitive warfare as “the art of using technology to alter the cognition of human targets”. This embodies the Sun Tzu-esque ideal of “combat without fighting.” In the context of Operation Doppelgänger, the weapon is not a missile or a virus, but a narrative that has been engineered to resonate with the target’s existing worldviews and biases.

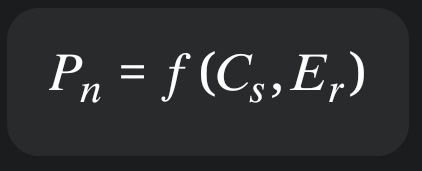

The operation utilizes “augmented reality” tools to create an alternative information ecosystem where the truth is obscured by layers of mimicry. This process can be modeled by treating the probability of a target accepting a narrative (P_n) as a function that increases with both the perceived credibility of the source (C_s) and the emotional resonance of the content (E_r), written generically as P_n = f(C_s, E_r).

By using cloned sites, the adversary artificially inflates C_s, while the specific Czech narratives maximize E_r. When these factors are optimized, the target is often unaware that their cognition is being manipulated, as they believe they are making a rational choice based on credible information.
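The relationship between P_n, C_s, and E_r can be made concrete with a toy model. The logistic form below is an assumption chosen purely for illustration; the article does not specify a functional form, and the weights and bias are arbitrary placeholders rather than fitted values. Both inputs are assumed to be scaled to the range 0 to 1.

```python
import math


def acceptance_probability(credibility: float, resonance: float,
                           w_c: float = 2.0, w_e: float = 2.0,
                           bias: float = -2.0) -> float:
    """Illustrative logistic model in which P_n rises with both C_s and E_r.

    credibility (C_s) and resonance (E_r) are assumed to lie in [0, 1];
    w_c, w_e, and bias are hypothetical parameters, not measured values.
    """
    z = w_c * credibility + w_e * resonance + bias
    return 1.0 / (1.0 + math.exp(-z))


# Same emotional resonance, different perceived source credibility.
anonymous_blog = acceptance_probability(credibility=0.2, resonance=0.8)
cloned_outlet = acceptance_probability(credibility=0.9, resonance=0.8)
print(cloned_outlet > anonymous_blog)
```

Under these assumptions, moving the same story from an anonymous blog to a cloned outlet raises the modeled acceptance probability without changing the content at all, which is the effect the cloning technique is designed to achieve.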

This manipulation is further enhanced by AI. Generative models allow for the creation of “synthetic diversity”—diverse articles that avoid the repetitive phrasing of older bots, making it much harder for detection algorithms to flag coordinated activity. AI is also used for sentiment monitoring, allowing the operation to pivot its narratives in real-time based on the public’s emotional response.
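To see why paraphrased output is harder to flag, consider one common near-duplicate measure: Jaccard similarity over word shingles. This is a minimal sketch of that general technique, not a description of what any particular platform runs; the function names and sample sentences are invented for illustration.

```python
import re
from typing import Set, Tuple


def shingles(text: str, size: int = 3) -> Set[Tuple[str, ...]]:
    """Return the set of overlapping word n-grams (shingles) in the text."""
    words = re.findall(r"\w+", text.lower())
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: str, b: str, size: int = 3) -> float:
    """Jaccard similarity of two texts' shingle sets (0.0 when both are empty)."""
    sa, sb = shingles(a, size), shingles(b, size)
    union = sa | sb
    return len(sa & sb) / len(union) if union else 0.0


original = "Sanctions are destroying the Czech economy and raising energy prices"
paraphrase = "The Czech economy is being ruined by sanctions that push energy costs up"

print(jaccard(original, original))    # verbatim repost
print(jaccard(original, paraphrase))  # reworded version of the same claim
```

A verbatim repost scores 1.0, while the reworded sentence shares only a single three-word shingle with the original and scores far lower, even though it makes the same claim. Detection that relies on surface overlap therefore needs to be supplemented with the structural and behavioral signals discussed in this article.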

Countermeasures: Building the Cognitive Army

Defending against the evolution of mimicry requires a move away from purely technical solutions. The mission of modern cyber intelligence is to build “societal resilience” through the integration of tradecraft, psychology, and technology. This involves several critical disciplines:

Narrative Intelligence (NARINT): The ability to deconstruct influence narratives and identify the “information alibis” used to hide deception.

Structured Analytic Techniques (SATs): Using formalized methods to externalize thought processes, challenge assumptions, and mitigate the cognitive biases that the adversary exploits.

Counterintelligence (CI): Detecting and disrupting the infrastructure of the operation—from identifying the Keitaro TDS instances to exposing the “German Odette” persona networks.

Operational Security (OPSEC): Maintaining anonymity while investigating these networks to prevent the adversary from identifying and retaliating against the defenders.

Training in these areas is essential for creating a “Cognitive Army”—a network of professionals who can spot the subtle inconsistencies in a cloned site or a fake persona before they can cause widespread damage. Programs such as the https://www.treadstone71.com/training/p-omega-syllabus provide the rigorous foundation needed to operate in this complex environment.

Future Outlook: The Deepening of the Grey Zone

As we look beyond the 2025 Czech elections, the tactics of Operation Doppelgänger are likely to become even more granular. We can anticipate the use of AI-driven voice cloning to create audio deepfakes, further enhancing the authority of the “mimic”. The operation will likely expand from mainstream social media to smaller, more fragmented platforms like Bluesky or specialized community forums, making detection even more difficult.

The core challenge remains the erosion of institutional trust. When the distinction between a legitimate government communication and a state-sponsored clone is lost, the social cohesion of the target nation is compromised. This is the ultimate goal of the “Grey Zone”—to achieve strategic dominance without ever firing a shot.

The evolution of mimicry in Operation Doppelgänger is a warning that our digital landscape is increasingly being weaponized. Protecting it requires a new kind of intelligence professional—one who is as comfortable analyzing a packet capture as they are deconstructing a psychological operation. The only durable defense is a proactive, intelligence-driven approach that anticipates the adversary’s moves and builds resilience from the ground up. This is the paradigm of the https://www.treadstone71.com/cognitive-army, where understanding people is the most powerful tool in the arsenal of cyber intelligence.

The Imperative for Advanced Tradecraft

The 2025 Czech election serves as a permanent case study in the risks of the “mimicry” phase of information warfare. The transition from crude “fake news” to high-fidelity clones signifies an adversary that is learning, adapting, and investing heavily in the psychological subversion of Western societies. To counter this, our own intelligence lifecycle must be equally dynamic.

Requirements: Focuses on narrative trends and psychological targets rather than just technical indicators like IPs and MD5 hashes.

Collection: Prioritizes the proactive hunting of Keitaro TDS instances and “Odette” personas instead of passive monitoring.

Analysis: Emphasizes structural and behavioral pattern analysis over simple content verification.

Production: Shifts to real-time narrative deconstruction and immediate alerts, replacing periodic threat reports.

Dissemination: Targets strategic leadership and public communicators alongside traditional IT security teams to build institutional resilience.

Mastering these skills is not a luxury; it is a necessity for the modern era. Training programs that address the intersection of AI, psychology, and technical tradecraft, such as those listed at https://www.treadstone71.com/training/building-a-cognitive-warfare-cyber-psyops-program, provide the roadmap for navigating this new frontier. By understanding the “why” behind the “how,” we can move from being reactive targets to proactive defenders in the ongoing cognitive war.
