Research remains the foundation of informed action. Whether guiding policy decisions, improving operational outcomes, or assessing adversary behavior, the method used to conduct research defines its reliability and interpretive strength. Analysts who rely on critical thinking must first understand how research is classified by nature and method before applying findings to intelligence production. Effective reasoning does not begin with assumptions. It begins with understanding how knowledge was constructed.

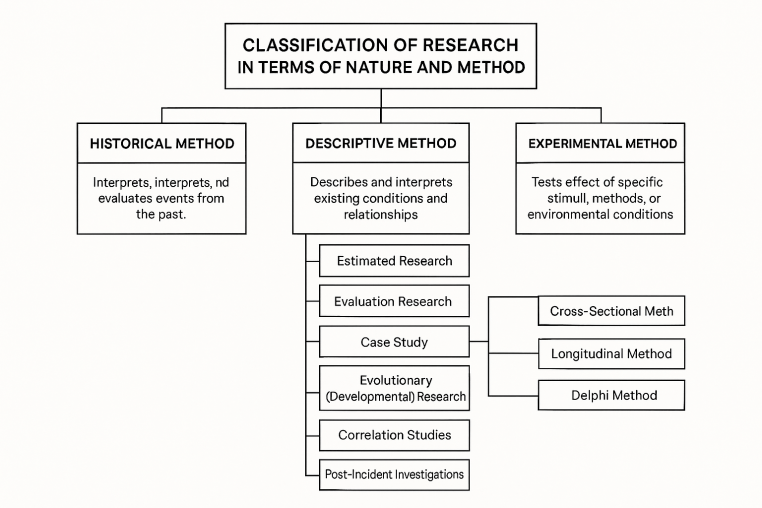

Classifying research by nature separates historical, descriptive, and experimental approaches. Each method supports a different kind of intelligence requirement. Historical research interprets past events to identify context, precedent, and causality. Its strength lies in retrospective analysis. Analysts using historical data seek not just to report what occurred but to understand why. They connect fragmented sources and verify patterns through timelines, contradictions, and source triangulation. The historical method suits strategic forecasting and long-term trend analysis.

Descriptive research shifts the focus to current phenomena. It neither predicts nor tests interventions; it observes, records, and interprets what exists. Several subcategories of descriptive research support practical intelligence tasks. Estimated research captures the present state of a phenomenon without testing relationships or building hypotheses. It works best for static snapshots where the goal is baseline understanding. Analysts often use it in preliminary reporting when mapping atmospherics or environmental baselines.

Evaluation research adds practical purpose. It assesses programs, decisions, or interventions to determine effectiveness. It supports post-operation reviews, cost-benefit assessments, or testing of narrative campaigns. Intelligence professionals applying this method produce findings that translate into actionable recommendations, yet they do not generalize beyond the evaluated instance. Evaluation’s utility lies in its application, not its abstraction.

The case study focuses on a single actor, cell, group, or community. Intelligence analysts often use this when tracking adversary units, key leaders, or enclave behavior. This method maps the full scope of variables influencing one target, and that detail produces robust behavioral profiles and operational context.

Survey studies examine how a characteristic, opinion, or behavior is distributed across a community. Most management-level research falls here, making it highly relevant for understanding sentiment, recruitment trends, morale, or response behavior. Cross-sectional surveys gather information at a single point in time and are often used for quick-turn assessments. Longitudinal surveys track changes over time. Intelligence professionals benefit from longitudinal studies when assessing strategic influence efforts, such as long-term shifts in public opinion following sustained propaganda. The Delphi method gathers expert consensus through structured rounds of questioning. Intelligence agencies often use Delphi for scenario testing or war gaming when uncertain futures demand structured expert judgment.
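The structured rounds of a Delphi exercise can be sketched in a few lines. The example below is a minimal illustration, not a prescribed procedure: it assumes experts submit numeric estimates each round, summarizes each round by its median and interquartile range (IQR), and treats a shrinking IQR as a sign of growing consensus. The function names, the threshold of 10, and all estimates are hypothetical and invented for illustration.

```python
from statistics import median, quantiles

def summarize_round(estimates):
    """Return (median, interquartile range) for one round of expert estimates."""
    q1, _, q3 = quantiles(estimates, n=4)  # three quartile cut points
    return median(estimates), q3 - q1

def first_consensus_round(rounds, iqr_threshold):
    """Return the 1-based index of the first round whose IQR falls at or
    below the threshold, or None if no round reaches it."""
    for number, estimates in enumerate(rounds, start=1):
        _, spread = summarize_round(estimates)
        if spread <= iqr_threshold:
            return number
    return None

# Hypothetical estimates (e.g. "probability in percent that event X occurs"),
# one list per round. Experts revise after seeing the previous summary.
rounds = [
    [10, 40, 25, 60, 30],  # round 1: wide disagreement
    [20, 35, 25, 40, 30],  # round 2: estimates move closer
    [25, 30, 27, 32, 30],  # round 3: narrow spread
]

for number, estimates in enumerate(rounds, start=1):
    mid, spread = summarize_round(estimates)
    print(f"round {number}: median={mid}, IQR={spread}")

# The threshold is an assumed stopping rule, chosen only for this example.
consensus_round = first_consensus_round(rounds, iqr_threshold=10)
print("consensus reached in round:", consensus_round)
```

A narrow spread shows only that the panel agrees, not that it is right, so the summary belongs alongside the experts' stated reasoning rather than in place of it.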

Evolutionary or developmental research focuses on change. It documents progress, regression, or adaptation in a process or population. This method becomes essential when tracking capability maturation, such as the progression of indigenous drone production networks or narrative development across adversary influence channels. It can be executed either as a continuous timeline or by studying different age or development groups in parallel.

Correlation studies explore relationships between two or more variables. Though causation remains unproven, correlation helps anticipate patterns. For example, a strong correlation between militia disinformation spikes and increased border tensions does not confirm that one causes the other, but it does justify closer examination. Intelligence analysts often use correlation when testing multiple drivers of behavior, mapping indicators, or supporting predictive modeling.
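To make the correlation idea concrete, the sketch below computes a Pearson correlation coefficient for two series. It is illustrative only: the weekly counts are invented, the variable names are hypothetical, and Pearson's r is one common measure among several, not one the text prescribes.

```python
from math import sqrt

def pearson_r(xs, ys):
    """Pearson correlation coefficient for two equal-length numeric sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Sum of co-deviations and sums of squared deviations from the means.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    ss_x = sum((x - mean_x) ** 2 for x in xs)
    ss_y = sum((y - mean_y) ** 2 for y in ys)
    if ss_x == 0 or ss_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(ss_x * ss_y)

# Hypothetical weekly counts, invented purely for illustration.
disinfo_posts = [12, 15, 30, 22, 41, 38, 19, 45]
border_incidents = [1, 2, 4, 3, 6, 5, 2, 7]

r = pearson_r(disinfo_posts, border_incidents)
print(f"r = {r:.2f}")  # values near +1 or -1 indicate a strong linear relationship
```

A high r here would justify closer examination and nothing more. Both series could be driven by a third factor, or the direction of influence could run the other way.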

Post-incident investigations reverse the logic. Rather than beginning with a cause and tracking its effects, they start with observed outcomes and trace back to probable causes. When a narrative shifts overnight in state-controlled media, analysts apply post-incident research to identify triggering variables such as sanctions, strikes, or leadership changes. These studies require careful source validation because the independent variables have already occurred and cannot be manipulated, and the research must not confuse proximity with influence.

Experimental research stands apart. It tests causality. Through random assignment, control, and manipulation, it determines the effect of one variable on another. Experimental design rarely suits intelligence field environments, yet simulation-based red teaming, controlled information injection into online ecosystems, or synthetic adversary modeling can approximate experimental controls. When done well, experimental methods deliver some of the most reliable predictive insights. They demand high resource investment and face practical and ethical limitations, but they reveal what actually changes behavior under controlled conditions.
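Random assignment and a simple effect estimate can be sketched as follows. This is a minimal illustration under stated assumptions: the participant labels, the seed, and the outcome scores are hypothetical, and a difference in group means stands in for whatever effect measure a real design would specify.

```python
import random
from statistics import mean

def randomly_assign(units, seed=None):
    """Shuffle units and split them into (treatment, control) groups.
    With an odd number of units, the control group gets the extra one."""
    rng = random.Random(seed)  # a seeded generator makes the split reproducible
    shuffled = list(units)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]

def difference_in_means(treated_outcomes, control_outcomes):
    """Estimate the effect as mean(treated) minus mean(control)."""
    return mean(treated_outcomes) - mean(control_outcomes)

# Hypothetical participants in a simulated exercise.
participants = [f"participant_{i}" for i in range(20)]
treatment, control = randomly_assign(participants, seed=7)
print(len(treatment), "treated;", len(control), "control")

# Hypothetical outcome scores, invented for illustration only.
treated_scores = [3, 5, 4, 6]
control_scores = [1, 3, 2, 4]
print("estimated effect:", difference_in_means(treated_scores, control_scores))
```

Because chance decides who receives the manipulation, pre-existing differences between units tend to balance across the two groups. That balance is what lets the difference in outcomes be read as an effect of the manipulated variable.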

Analysts cannot produce accurate or timely insight without mastering the structure behind research findings. Misclassifying a descriptive study as causal, or reading correlation as certainty, degrades the entire analytic process. Intelligence work requires methodological precision. Before drawing conclusions or issuing warnings, every analyst must interrogate the nature of the research behind the data. What did the research measure? How did it measure it? Under what assumptions and limitations?

Effective reasoning begins with understanding method. Once analysts map the structure of research, they decode its meaning, spot its weaknesses, and apply it with purpose. Precision in method leads to clarity in conclusions. In intelligence, that clarity can mean the difference between foresight and failure.
